Collaborative drones for long-range perception

Friday, February 27, 2026

One way to build aerial drones that have the ability to perceive depth is to emulate the way humans and other animals solve this problem – with stereo vision.

Two cameras that act like eyes are mounted on a drone, capturing slightly different viewpoints. An algorithm interprets the differences between the viewpoints and generates a sense of depth. It’s an efficient approach that avoids the heavy power consumption that comes with an active sensing method like LiDAR.

But stereo vision has a fundamental limitation: the perception range of commercial stereo camera systems depends on the distance between the two cameras, known as the baseline, and is therefore limited. This is because the angular difference between two viewpoints, known as parallax, shrinks as the distance to objects in a scene increases. As a result, a drone with stereo cameras mounted 10 centimeters apart will be unable to perceive depth beyond 20 meters or so. That distance is insufficient for a fast-moving drone that must navigate complex environments and respond rapidly to dynamic objects.
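The relationship between camera separation and range can be sketched with the textbook pinhole stereo model, in which depth equals focal length times camera separation divided by disparity. The short Python example below is an illustration only: the focal length and matching noise are assumed values, not figures from the study or from any particular camera.

```python
def depth_from_disparity(focal_px, baseline_m, disparity_px):
    """Pinhole stereo model: depth Z = f * B / d."""
    return focal_px * baseline_m / disparity_px


def depth_error(focal_px, baseline_m, depth_m, disparity_noise_px):
    """First-order depth uncertainty: dZ ~= Z^2 / (f * B) * dd."""
    return depth_m ** 2 / (focal_px * baseline_m) * disparity_noise_px


FOCAL_PX = 400.0            # assumed focal length, in pixels
DISPARITY_NOISE_PX = 0.25   # assumed feature-matching noise, in pixels

if __name__ == "__main__":
    for baseline in (0.10, 3.0):        # camera separation in meters
        for depth in (20.0, 70.0):      # distance to the object in meters
            err = depth_error(FOCAL_PX, baseline, depth, DISPARITY_NOISE_PX)
            print(f"B={baseline:.2f} m, Z={depth:.0f} m -> error ~ {err:.2f} m")
```

Because the error grows with the square of the distance and shrinks in proportion to the camera separation, widening the separation is the most direct way to push the usable range outward.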

Researchers have proposed extending the baseline – for instance, by placing cameras on opposite wings of a fixed-wing drone. Yet this approach fundamentally depends on large platform dimensions and cannot be implemented on small drones due to strict physical scale constraints.

MBZUAI Assistant Professor of Robotics Xingxing Zuo and his collaborators, Zhaoying Wang and Wei Dong from Shanghai Jiao Tong University, have come up with a creative approach to address this problem. If one drone isn’t big enough, why not use two?

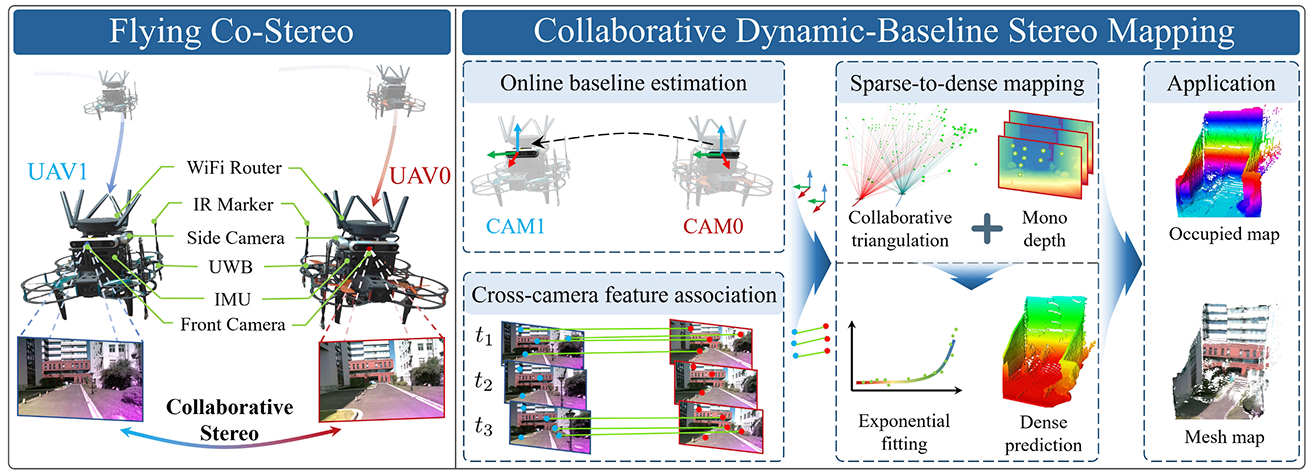

Rather than making the stereo baseline bigger on one drone, they separate the cameras across two drones that fly in formation. With cameras three meters apart instead of 10 centimeters, the system, called Flying Collaborative Stereo (Flying Co-stereo), can accurately perceive depth at up to 70 meters, an improvement of 350% compared to widely deployed commercial RealSense stereo cameras.

“They’re collaborative drones that have long-range depth perception using stereo vision to determine metric scale,” Zuo says.

But to make it work, Zuo and his collaborators had to solve several technical challenges. They recently published a study on the topic in IEEE Transactions on Robotics.

Challenges of a dynamic baseline

Since traditional stereo cameras are fixed in place, the distance between the cameras and their relative orientation are known and don’t change. But when two cameras are placed on drones that fly independently, the baseline changes continually, if only slightly, as the drones move through space. To make sense of the data captured by the cameras, the relative position of the drones needs to be precisely determined down to the millimeter. And this needs to be done in real time, with limited on-board computational power and communication bandwidth.

The solution Zuo and his collaborators developed is a framework they call collaborative dynamic-baseline stereo mapping (CDBSM), which has three components.

First, the drones are equipped with side-facing cameras and infrared sensors that monitor their wingman. The researchers describe it as a dual-spectrum visual-inertial-ranging estimator (DS-VIRE) that fuses visible and infrared data with ultra-wideband range measurements to give an accurate estimation of the baseline.

The second component uses front-facing cameras to track objects in the scene. The two drones need to identify the same visual features, like corners of buildings, in both of their camera views to calculate depth. Called “feature matching,” this is challenging because the drones are looking from different angles that keep changing as they fly.

There are powerful AI-based systems that can do this even when viewpoints are different: SuperPoint, which detects and describes features, and SuperGlue, which matches them. But these algorithms run too slowly on the on-board hardware to analyze every frame of video.

The researchers developed a hybrid approach to overcome this limitation. They run SuperPoint and SuperGlue intermittently. Between these periodic matches they use a much faster, simpler method called optical flow that tracks features forward in time. SuperPoint and SuperGlue provide periodic guidance while optical flow provides fast frame-to-frame prediction.
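The scheduling idea behind this hybrid approach can be sketched in a few lines of Python. This is a minimal illustration, not the researchers' implementation: `learned_match` stands in for a slow learned matcher such as SuperPoint and SuperGlue, `flow_track` stands in for an optical flow tracker, and both names, along with the `keyframe_interval` parameter, are hypothetical placeholders supplied by the caller.

```python
from typing import Callable, Dict, Hashable, List, Tuple

Point = Tuple[float, float]
# feature id -> (pixel in drone A's image, pixel in drone B's image)
Matches = Dict[Hashable, Tuple[Point, Point]]


def hybrid_association(
    frames: List[object],
    learned_match: Callable[[object], Matches],
    flow_track: Callable[[Matches, object, object], Matches],
    keyframe_interval: int = 10,
) -> List[Matches]:
    """Run the slow matcher every `keyframe_interval` frames, flow in between.

    Each element of `frames` is assumed to hold one synchronized image pair.
    """
    if keyframe_interval < 1:
        raise ValueError("keyframe_interval must be at least 1")
    results: List[Matches] = []
    matches: Matches = {}
    previous = None
    for index, frame in enumerate(frames):
        if index % keyframe_interval == 0 or not matches:
            # Periodic guidance, or recovery after every track was lost.
            matches = learned_match(frame)
        else:
            # Cheap frame-to-frame prediction from the previous matches.
            matches = flow_track(matches, previous, frame)
        results.append(matches)
        previous = frame
    return results


if __name__ == "__main__":
    # Toy stand-ins: frames are integers, matchers return fixed data.
    def slow(frame):
        return {0: ((10.0, 5.0), (4.0, 5.0))}

    def fast(matches, prev, cur):
        return matches

    per_frame = hybrid_association(list(range(5)), slow, fast, keyframe_interval=2)
    print(len(per_frame), "frames associated")
```

Falling back to the slow matcher whenever the tracker returns no matches is a design choice made for this sketch; it keeps the loop from running with an empty feature set between scheduled refreshes.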

This video describes Flying Co-stereo, a system that uses stereo cameras mounted on two drones to perceive depth at long distance.

The system now knows where the two drones are in relation to each other and what visual features both drones see, but these observations are in 2D and have no information about depth.

The third component of the framework makes the translation from 2D to 3D. It uses standard stereo vision geometry to calculate the precise 3D position of a set of sparse landmarks in a scene. They are referred to as “sparse landmarks” because there are no more than 200 of them in an image with more than three hundred thousand pixels.
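To give a sense of the geometry involved, the sketch below triangulates a single landmark from two viewing rays using the classic midpoint method. It is an assumption-laden illustration rather than the method used in the study: both rays are assumed to be already expressed in a common frame (the relative rotation between the drones has been applied), and the camera intrinsics `fx`, `fy`, `cx` and `cy` are made-up values.

```python
from typing import Tuple

Vec = Tuple[float, float, float]


def _dot(a: Vec, b: Vec) -> float:
    return sum(x * y for x, y in zip(a, b))


def pixel_to_ray(u: float, v: float, fx: float, fy: float, cx: float, cy: float) -> Vec:
    """Back-project a pixel into a viewing ray in the camera frame."""
    return ((u - cx) / fx, (v - cy) / fy, 1.0)


def triangulate_midpoint(origin_a: Vec, dir_a: Vec, origin_b: Vec, dir_b: Vec) -> Vec:
    """Return the midpoint of the shortest segment joining two rays."""
    w0 = tuple(p - q for p, q in zip(origin_a, origin_b))
    a, b, c = _dot(dir_a, dir_a), _dot(dir_a, dir_b), _dot(dir_b, dir_b)
    d, e = _dot(dir_a, w0), _dot(dir_b, w0)
    denom = a * c - b * b
    if abs(denom) < 1e-12:
        raise ValueError("rays are (nearly) parallel; depth is unobservable")
    s = (b * e - c * d) / denom   # distance parameter along ray A
    t = (a * e - b * d) / denom   # distance parameter along ray B
    point_a = tuple(o + s * k for o, k in zip(origin_a, dir_a))
    point_b = tuple(o + t * k for o, k in zip(origin_b, dir_b))
    return tuple((p + q) / 2.0 for p, q in zip(point_a, point_b))


if __name__ == "__main__":
    # Assumed intrinsics and a 3 m baseline along the x axis.
    fx = fy = 400.0
    cx, cy = 320.0, 240.0
    ray_a = pixel_to_ray(328.0, 244.0, fx, fy, cx, cy)
    ray_b = pixel_to_ray(304.0, 244.0, fx, fy, cx, cy)
    landmark = triangulate_midpoint((0.0, 0.0, 0.0), ray_a, (3.0, 0.0, 0.0), ray_b)
    print("landmark (m):", landmark)
```

The parallel-ray check reflects the limitation discussed earlier: when the two rays are almost parallel, as they are for distant objects seen over a short baseline, the intersection becomes numerically unreliable.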

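The sparse landmarks carry metric scale, but they cover only a tiny fraction of the image. To fill in the rest, the researchers turn to an AI system called DepthAnythingV2, which predicts depth for every pixel. Its predictions are only relative: the system might say that one point is closer than another, but it can’t tell by how many meters. The sparse landmarks are then used to translate these up-to-scale depth predictions into metric distances.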
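One way to picture this conversion: wherever a sparse landmark with a known metric depth overlaps the dense up-to-scale prediction, the pair constrains the mapping between the two. The Python sketch below fits a simple linear scale and shift by least squares. This linear model is an assumption chosen for illustration, the function names are hypothetical, and the study may use a different alignment model.

```python
from typing import List, Sequence, Tuple


def fit_scale_shift(relative: Sequence[float], metric: Sequence[float]) -> Tuple[float, float]:
    """Least-squares fit of metric ~= scale * relative + shift."""
    n = len(relative)
    if n < 2 or n != len(metric):
        raise ValueError("need at least two paired samples of equal length")
    mean_r = sum(relative) / n
    mean_m = sum(metric) / n
    var_r = sum((r - mean_r) ** 2 for r in relative)
    if var_r == 0.0:
        raise ValueError("relative depths are all identical; scale is undetermined")
    cov = sum((r - mean_r) * (m - mean_m) for r, m in zip(relative, metric))
    scale = cov / var_r
    shift = mean_m - scale * mean_r
    return scale, shift


def to_metric(relative_map: Sequence[Sequence[float]], scale: float, shift: float) -> List[List[float]]:
    """Apply the fitted mapping to every pixel of a dense relative depth map."""
    return [[scale * r + shift for r in row] for row in relative_map]


if __name__ == "__main__":
    # Made-up samples: relative predictions at landmark pixels and the
    # metric depths of those landmarks from stereo triangulation.
    relative_at_landmarks = [0.10, 0.20, 0.50]
    metric_at_landmarks = [12.0, 22.0, 52.0]
    scale, shift = fit_scale_shift(relative_at_landmarks, metric_at_landmarks)
    dense = to_metric([[0.10, 0.30], [0.40, 0.60]], scale, shift)
    print(scale, shift, dense)
```

With a few hundred landmarks per image, a fit like this is heavily overdetermined, which is what lets a small set of sparse points anchor a dense map.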

As they note in the study, the result is that the system generates dense 3D mapping at up to 70 meters with a relative error rate between 2.3% and 9.7%. This capability exceeds conventional stereo cameras by up to 350% in perception range and 450% in coverage area.

Architecture of Flying Co-Stereo within the researchers’ proposed Collaborative Dynamic-Baseline Stereo Mapping framework.

The researchers also investigated the selection of an optimal baseline length to minimize the overall landmark triangulation error. While a longer baseline reduces the triangulation condition number, thereby improving numerical stability and precision, it simultaneously increases the inter-UAV visual observation distance. This, in turn, degrades the accuracy of baseline estimation between collaborative drones. The best baseline is therefore a compromise between these two effects.
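This trade-off can be illustrated with a toy error model. The sketch below is not the analysis from the study: it assumes that matching noise contributes a depth error that shrinks as the baseline grows, that the uncertainty of the baseline estimate grows with the square of the baseline, and that the two errors are independent. Every constant, including the hypothetical `baseline_noise_gain`, is invented purely for demonstration.

```python
import math
from typing import Iterable


def total_depth_error(
    baseline_m: float,
    depth_m: float,
    focal_px: float,
    disparity_noise_px: float,
    baseline_noise_gain: float,
) -> float:
    """Combine two independent depth error sources for one baseline length."""
    # Error from feature-matching noise: falls as the baseline grows.
    matching_term = depth_m ** 2 / (focal_px * baseline_m) * disparity_noise_px
    # Assumed model: baseline uncertainty grows quadratically with separation.
    sigma_baseline = baseline_noise_gain * baseline_m ** 2
    # Since Z = f * B / d, a relative baseline error maps to the same relative depth error.
    baseline_term = depth_m * sigma_baseline / baseline_m
    return math.hypot(matching_term, baseline_term)


def best_baseline(
    candidates: Iterable[float],
    depth_m: float,
    focal_px: float,
    disparity_noise_px: float,
    baseline_noise_gain: float,
) -> float:
    """Pick the candidate baseline with the smallest modeled depth error."""
    return min(
        candidates,
        key=lambda b: total_depth_error(
            b, depth_m, focal_px, disparity_noise_px, baseline_noise_gain
        ),
    )


if __name__ == "__main__":
    candidates = [0.5 * i for i in range(1, 13)]  # 0.5 m to 6.0 m
    choice = best_baseline(
        candidates,
        depth_m=70.0,
        focal_px=400.0,             # assumed
        disparity_noise_px=0.25,    # assumed
        baseline_noise_gain=0.005,  # assumed, chosen only for illustration
    )
    print("lowest modeled error at baseline (m):", choice)
```

Under any model of this shape, the error curve is high at both extremes and has a single minimum in between, which is the qualitative behavior the paragraph above describes.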

Drones of today and tomorrow

Zuo says that there are many interesting applications for Flying Co-stereo in fields such as logistics and search and rescue. The system can also support detailed mapping of complex urban environments and proactive planning for long-range operational scenarios.

But the potential of aerial drones goes beyond flying through spaces in their journey to get from one place to another.

In the future, Zuo says, researchers will build autonomous drones that not only fly through environments but interact with them. “We will want drones to be able to grasp and move things around in the world, and for this kind of manipulation it’s necessary to have an accurate 3D understanding of the world.”
