Award-winning robotic fish take deep learning below the surface

Monday, March 16, 2026

Autonomous underwater robots that can go where human divers can’t and gather information about marine environments hold the potential to provide great benefits to marine biologists and other researchers who study the ocean.

But operating in the sea poses several challenges, even to robots. Communication is difficult, and commonly used robotic control and localization methods don’t work below the surface. In addition, the propulsion and communications systems of conventional underwater robotic vehicles often produce noise that can travel long distances, disturbing the ecosystems they’re meant to observe.

Three researchers based in Abu Dhabi – MBZUAI Professor of Robotics Cesare Stefanini, Federico Renda of Khalifa University, and Giulia De Masi of Sorbonne University Abu Dhabi – were recently recognized with the Sheikh Hamdan bin Zayed Award for Environmental Research for developing a system of underwater robotic vehicles to address these challenges.

Called H-SURF (heterogeneous swarm of underwater robotic fish), the system is a school of collaborative robotic fish that can swim, communicate, and gather information about aquatic environments, all without human guidance.

The research was funded by the Technology Innovation Institute (TII) and was conducted at Khalifa University. Stefanini, Renda, and De Masi are principal investigators for the project and Stefanini’s role was to conceive and lead the development of the underwater robots.

A convergence of innovation

What makes H-SURF innovative isn’t a single technological advance but the convergence of innovations from different fields that makes the researchers’ approach possible.

The first is bioinspired robotics. The H-SURF robots themselves are shaped like fish, with streamlined bodies, fins, and propellers that produce fluid, natural-looking movement, all while providing the ability to dive, surface, and avoid obstacles. “If you want to interact with life, you need to understand it,” Stefanini says. “In the ocean, for example, you can’t create unnecessary noise, or you will affect marine life.”

The robots can communicate with each other using light as an alternative to sound, which reduces the overall noise of the system and is a nod to certain species of fish.

Design of the H-SURF robots that act collectively as a swarm.

Stefanini says that nature isn’t always the best source for inspiration to solve design challenges – for example, airplanes don’t exist in nature. But biology is valuable as a model when designing robotic systems for complex environments, because over the course of millions of years, organisms have developed efficient ways to navigate complexity.

The second innovation relates to swarm intelligence. The robots are guided by what are known as swarm control algorithms, which allow them to move collectively as one unit. The movement of a robot in the school isn’t determined by its own location or by a guide robot, but by its relative position to other robots in the swarm.

As the researchers explain in a study about H-SURF, this approach is computationally efficient and requires minimal data to be shared between the robots. “Even with simple onboard code and computation, the swarm can carry out complex collective tasks, and the robots behave like one large robot acting together,” Stefanini says.
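
The idea of steering by relative position alone can be illustrated with a minimal sketch. This is not the H-SURF control algorithm, which the article does not describe in detail; it is a generic spacing rule, and the function name `relative_step` and the parameters `target_dist` and `gain` are assumptions made for illustration. Each robot sees only the offsets to its neighbors and nudges itself toward a preferred spacing.

```python
import math

def relative_step(offsets, target_dist=1.0, gain=0.1):
    """Return a 2D displacement computed only from neighbor offsets.

    offsets: list of (x, y) positions of neighbors measured relative to
    this robot, so no global position or leader is needed.
    target_dist and gain are illustrative values, not H-SURF parameters.
    """
    step_x, step_y, count = 0.0, 0.0, 0
    for ox, oy in offsets:
        dist = math.hypot(ox, oy)
        if dist == 0.0:
            continue  # direction is undefined for a coincident neighbor
        # Positive error: neighbor is too far, move toward it.
        # Negative error: neighbor is too close, back away.
        error = dist - target_dist
        step_x += gain * error * ox / dist
        step_y += gain * error * oy / dist
        count += 1
    if count == 0:
        return (0.0, 0.0)
    # Average so the step size does not grow with the number of neighbors.
    return (step_x / count, step_y / count)

# Example: one neighbor too far ahead, one too close on the left.
step = relative_step([(2.0, 0.0), (0.0, 0.5)])
```

Because each robot needs only a handful of offsets and a few multiplications per update, a rule of this kind is cheap to compute and requires little data to be exchanged, which is the property the researchers highlight.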

The researchers have also developed a multi-agent reinforcement learning model and found that, when acting collaboratively, the robots developed approaches for inspecting an underwater pipeline faster than a single robotic fish could working alone.

Eyes in the water

The third element is embedded computer vision, and this is the focus of the team’s most recent study on H-SURF. The researchers equipped the H-SURF robots with a lightweight convolutional neural network (CNN) that uses the robots’ front- and side-facing cameras to identify marine species in real time without connections to external servers. (The neural network also aids coordination with other robots in the swarm.)

The model is based on YOLO11n, a recent version of the YOLO object detector, and processes each image in under 7 milliseconds: on average, 0.9 milliseconds for preprocessing, 4.6 milliseconds for inference, and 1.4 milliseconds for postprocessing. Speed is important because both the robot and marine life are moving and visibility is often limited in real-world applications.
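
The timing figures above can be checked with a few lines of arithmetic. The sketch below only adds up the three reported averages and converts the total into an approximate upper bound on frames per second; it assumes images are processed one at a time and ignores camera capture and any other overhead, which the article does not quantify. The variable names are illustrative.

```python
# Average per-image stage times reported for the model, in milliseconds.
stage_ms = {"preprocess": 0.9, "inference": 4.6, "postprocess": 1.4}

# End-to-end time per image is the sum of the three stages.
total_ms = sum(stage_ms.values())

# Rough upper bound on throughput if frames are handled sequentially.
max_fps = 1000.0 / total_ms

print(f"total: {total_ms:.1f} ms per image, about {max_fps:.0f} frames per second")
```

The stages sum to 6.9 milliseconds, consistent with the "under 7 milliseconds" figure, and inference accounts for about two thirds of the total.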

The model runs locally on an onboard Raspberry Pi, a single-board computer that costs a fraction of what industrial computing hardware costs. “We wanted to focus more on swarm intelligence than super intelligence of any individual nodes in the swarm,” Stefanini says. Even with this comparatively limited computational power, the robots can classify organisms, analyze their behavior, detect anomalies, estimate the number of a particular species, and determine the age of the creatures they encounter.

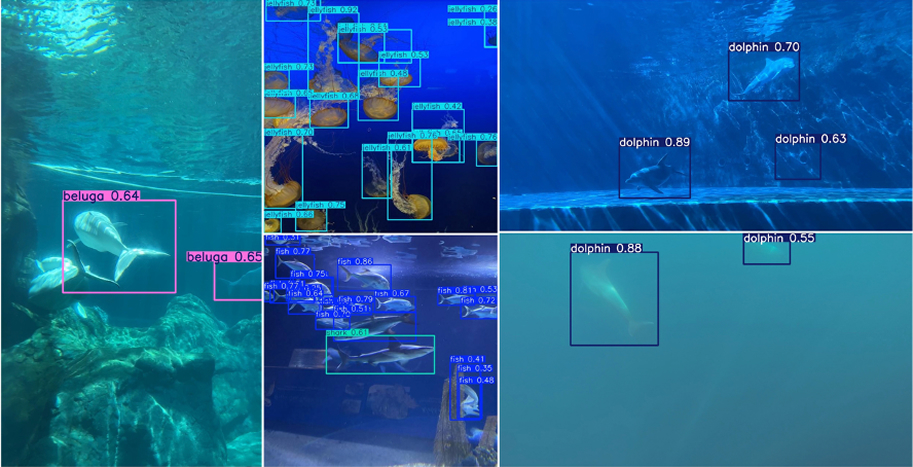

The researchers trained the model to identify six classes of marine animals: sharks, rays, jellyfish, dolphins, belugas, and generic “fish.” These classes were chosen intentionally to test the performance of the system, because some of them can look similar underwater.

To train the model, the researchers used two open-access datasets, Roboflow Aquarium and Google’s Open Images Dataset V7. They complemented these datasets with their own images captured at aquariums in Italy and the US and with images of dolphins collected as part of the UAE Dolphin Project Initiative, which is dedicated to the monitoring and conservation of coastal dolphin populations in the UAE. Two applications, Roboflow and Makesense, were used to manage the data pipeline and help with annotation and image resizing. The researchers also manually verified labels in the dataset.

H-SURF predictions on sample images from the test set, as published in a recent study.

In total, the dataset included more than 2,000 images that were split into training (82%), validation (12%), and test (6%) sets. According to the team’s most recent study, H-SURF performed well at classifying marine life, with a mean average precision of 0.78. The researchers note that the combination of an accurate neural network with an autonomous robotic platform “allows for scalable, in-situ biodiversity assessment and long-term observation missions in marine ecosystems without requiring constant human supervision.”
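
To give a sense of scale, the split percentages can be turned into image counts. The article says only that the dataset held "more than 2,000" images, so the figure of 2,000 used below is an assumed round number for illustration, and the helper `split_counts` is hypothetical rather than part of the researchers' pipeline.

```python
def split_counts(n_images, val_pct=12, test_pct=6):
    """Split a dataset size into train/validation/test counts.

    Validation and test sizes are rounded down; the training set takes
    whatever remains, so the three counts always sum to n_images.
    """
    val = n_images * val_pct // 100
    test = n_images * test_pct // 100
    train = n_images - val - test
    return {"train": train, "val": val, "test": test}

# Assumed dataset size: the article reports "more than 2,000" images.
counts = split_counts(2000)
print(counts)
```

At that assumed size the test set would contain roughly 120 images, which is worth keeping in mind when reading a single summary score such as mean average precision.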

Power and possibility

The researchers will continue to improve H-SURF. One current limitation is energy consumption: computation uses more energy than locomotion. “The brain is much less power hungry compared to what we can do in computers,” Stefanini says. The current version of H-SURF can operate for four to five hours before the robots need to be recharged.

With H-SURF, Stefanini and his collaborators have established a platform that others can build on by adding to the dataset, with the goal of supporting additional applications in environmental conservation and fishery management. In addition, Stefanini hopes that an incoming doctoral student at MBZUAI could contribute to the next phase of development of the system, in collaboration with the institutions that supported the initial project. But even as it stands now, H-SURF is an important step in the development of non-invasive, autonomous systems that can monitor marine environments, many of which are under threat.

